Stop Giving AI Agents Your API Keys

Every API key you give an AI agent is an attack surface. A local reverse proxy keeps your credentials safe while agents get full API access.

Practical security insights and product updates from the team building safer, simpler key management for modern APIs.

Every AI security layer has holes. The Swiss cheese model shows why stacking imperfect defenses is the only strategy that works for AI agent pipelines.

Multi-agent AI pipelines have a supply chain problem. See 4 real attack patterns, including MCP skill trojans and orchestrator trust exploitation, plus 5 code-level defenses.

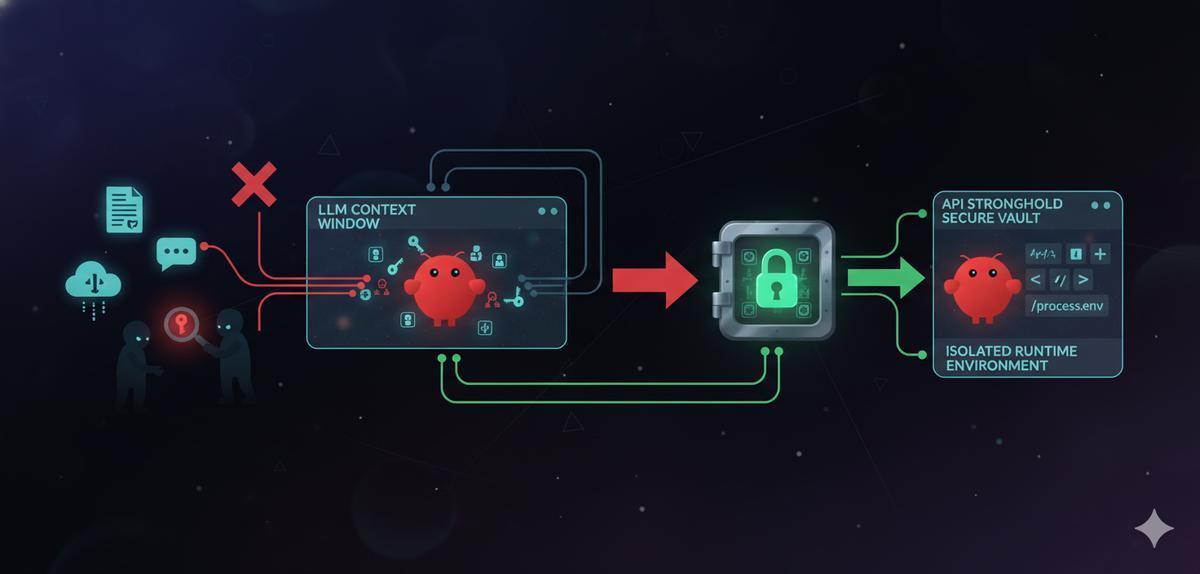

10 documented prompt injection attacks that hit production AI systems, plus 5 concrete defense steps with code you can copy. Each attack shows exactly how credentials get stolen.

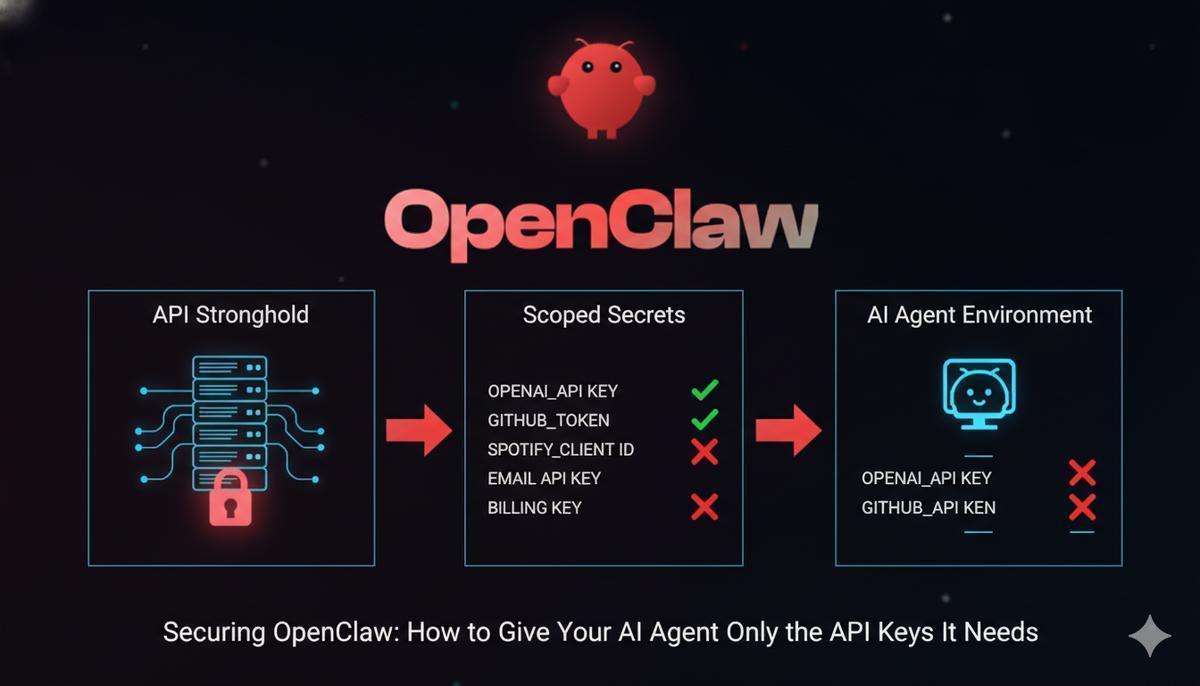

Security researchers found 21,000 exposed OpenClaw instances in two weeks. Here's why agent tokens leak and how scoped secrets contain the damage.

135,000 exposed OpenClaw instances, 824+ malicious skills, and a CVSS 8.8 RCE in 2026. Here's what went wrong and how to stop your API keys from being the next casualty.

Crypto AI agents execute trades at machine speed with no human confirmation. When the API key leaks, the damage happens in minutes. Here's how to scope credentials so a theft can't drain your account.

7% of OpenClaw skills expose API keys through the LLM context window. Isolate your credentials with scoped secrets so keys never touch the model.

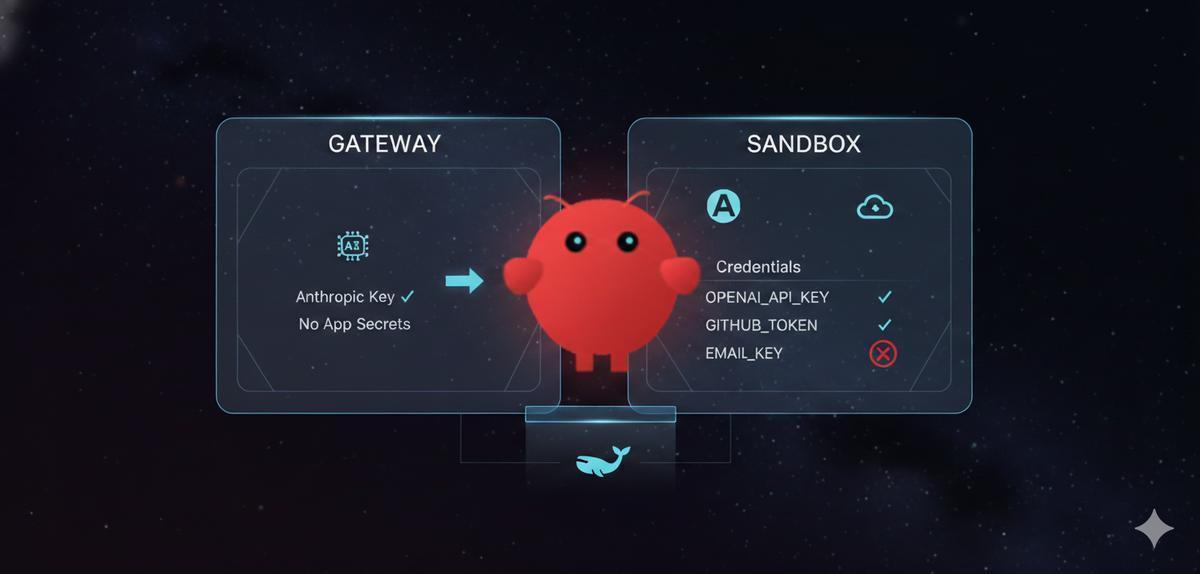

Run OpenClaw in Docker with scoped, expiring API keys that never leak through .env files. Step-by-step: container setup, proxy config, and scoped secrets in under 10 minutes.

OpenClaw agents hold every key in your .env. Prompt injection can use all of them. Here's how to run OpenClaw with scoped, zero-knowledge encrypted secrets so a compromised session can only reach what it needs.